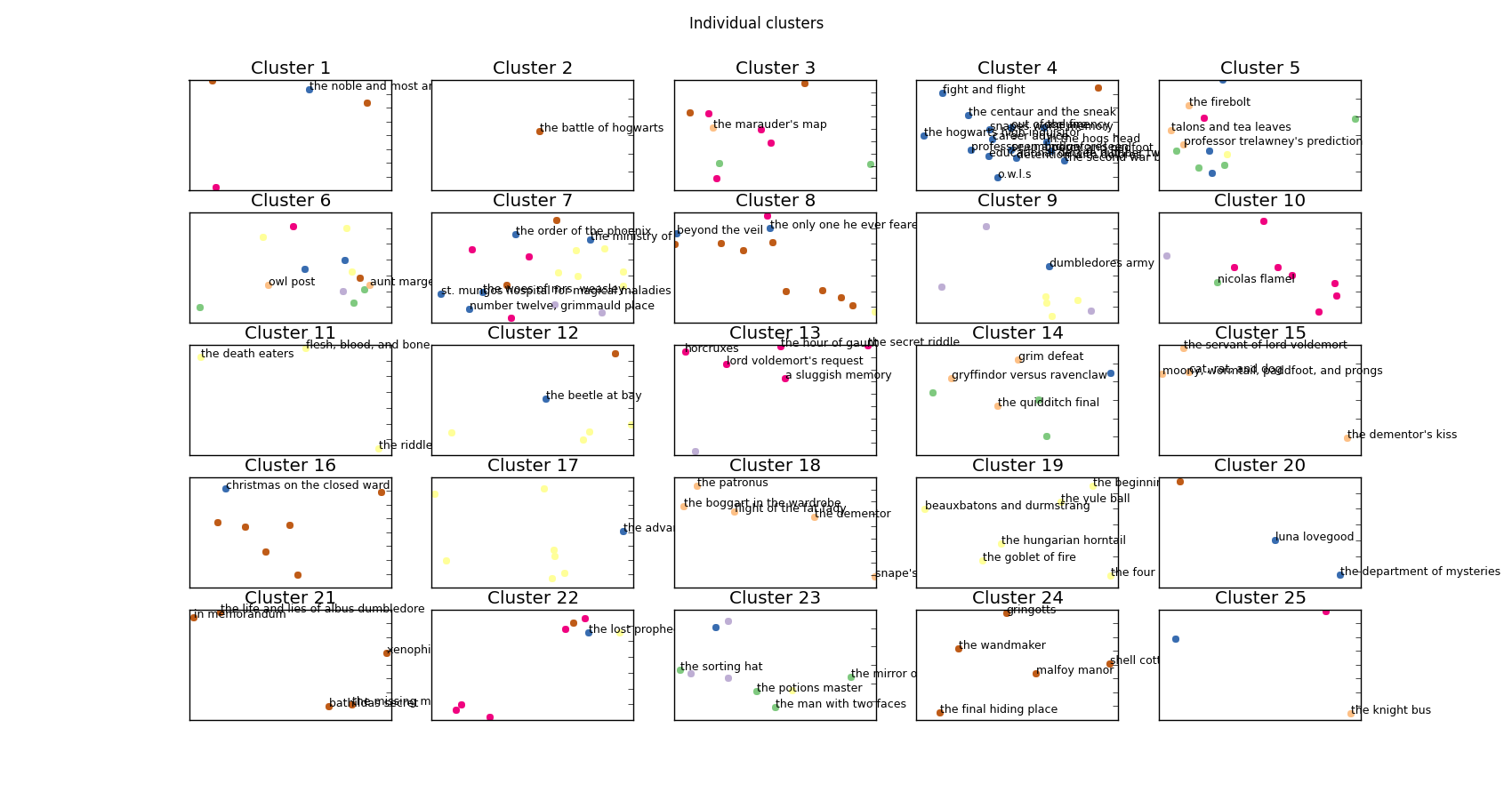

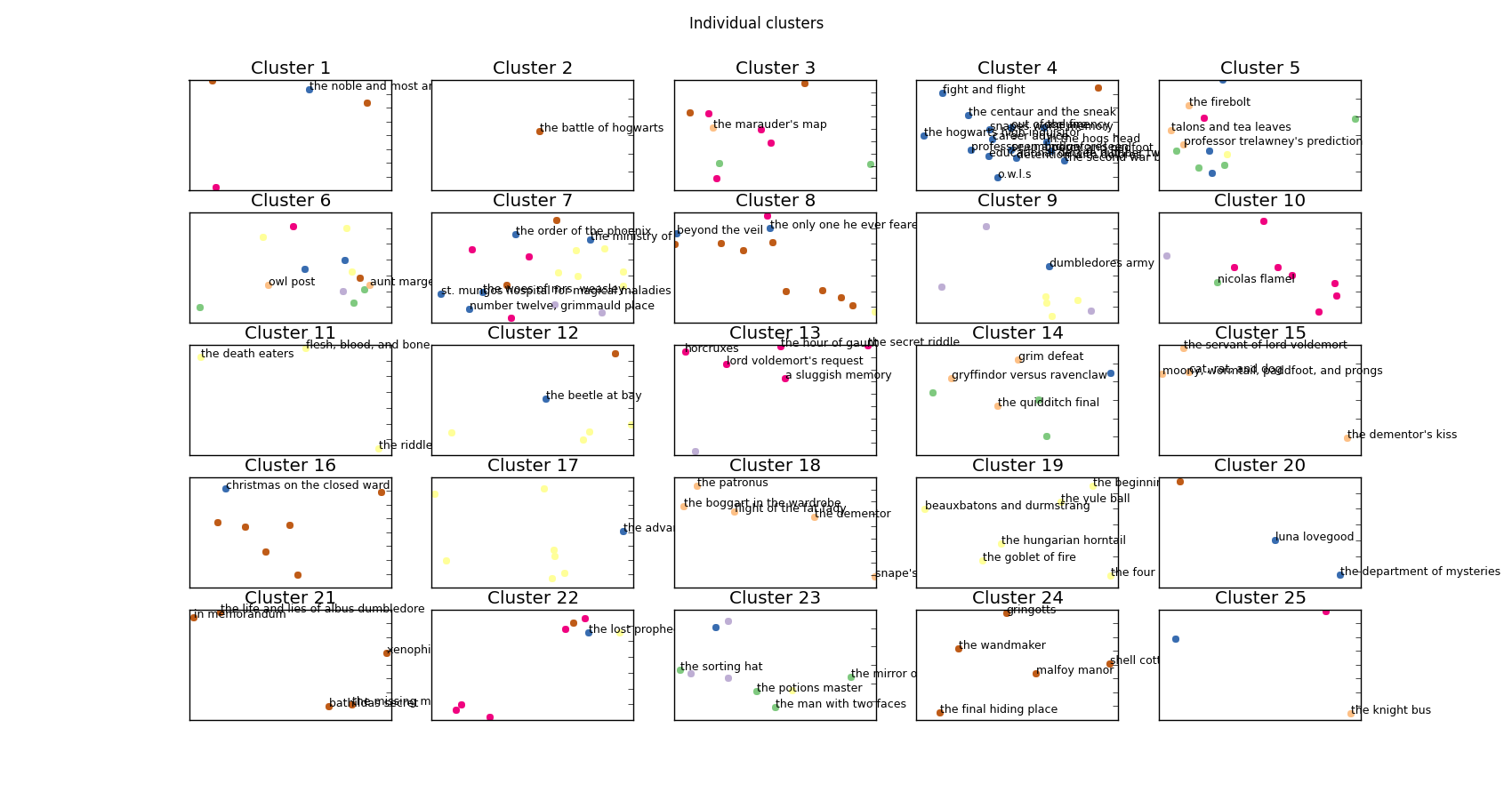

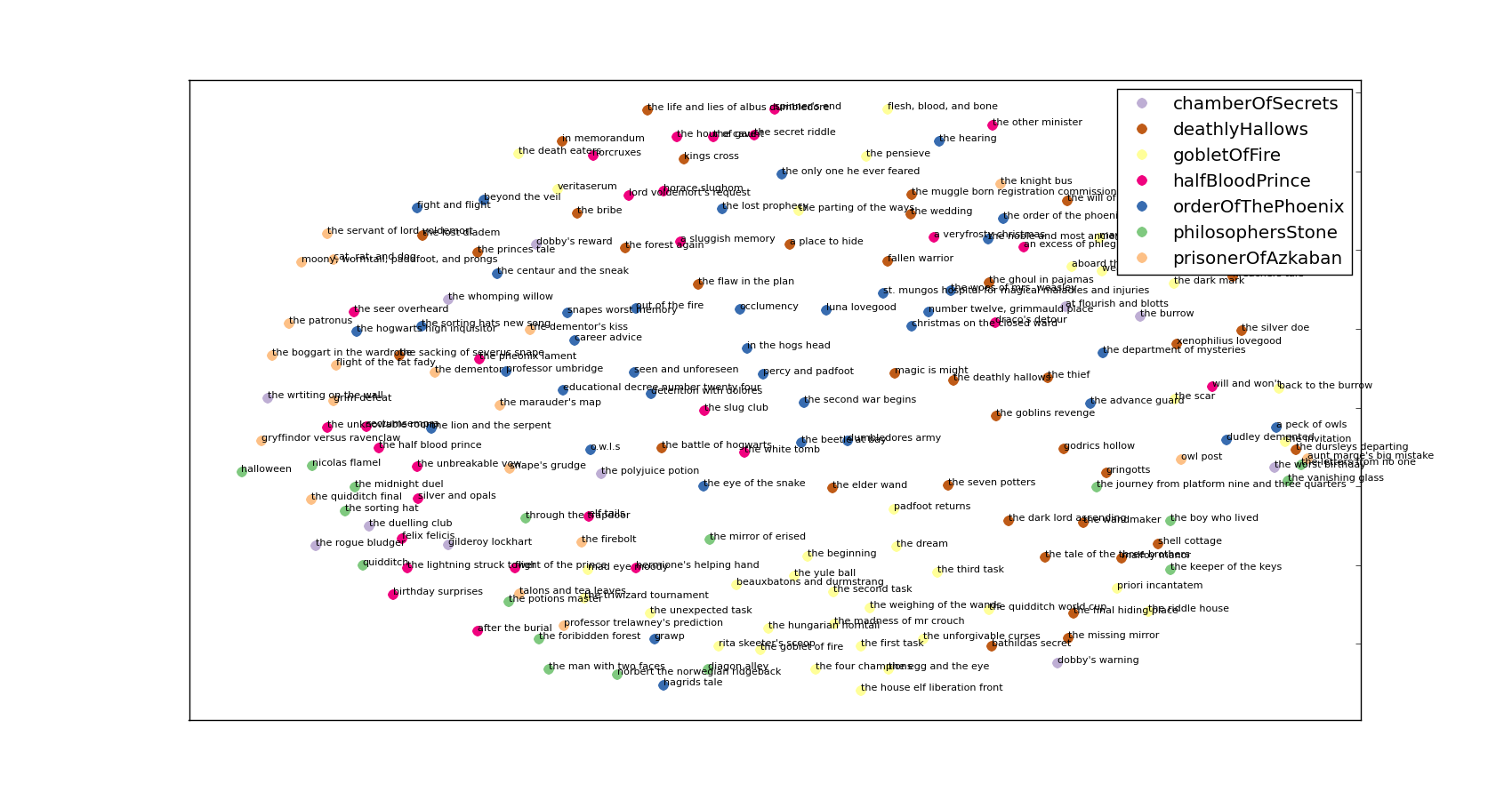

I’ll open up with the money-shot – these are all of the clusters that I was able to find using the whole Harry Potter series, grouped by chapter:

Every cluster plotted separately.

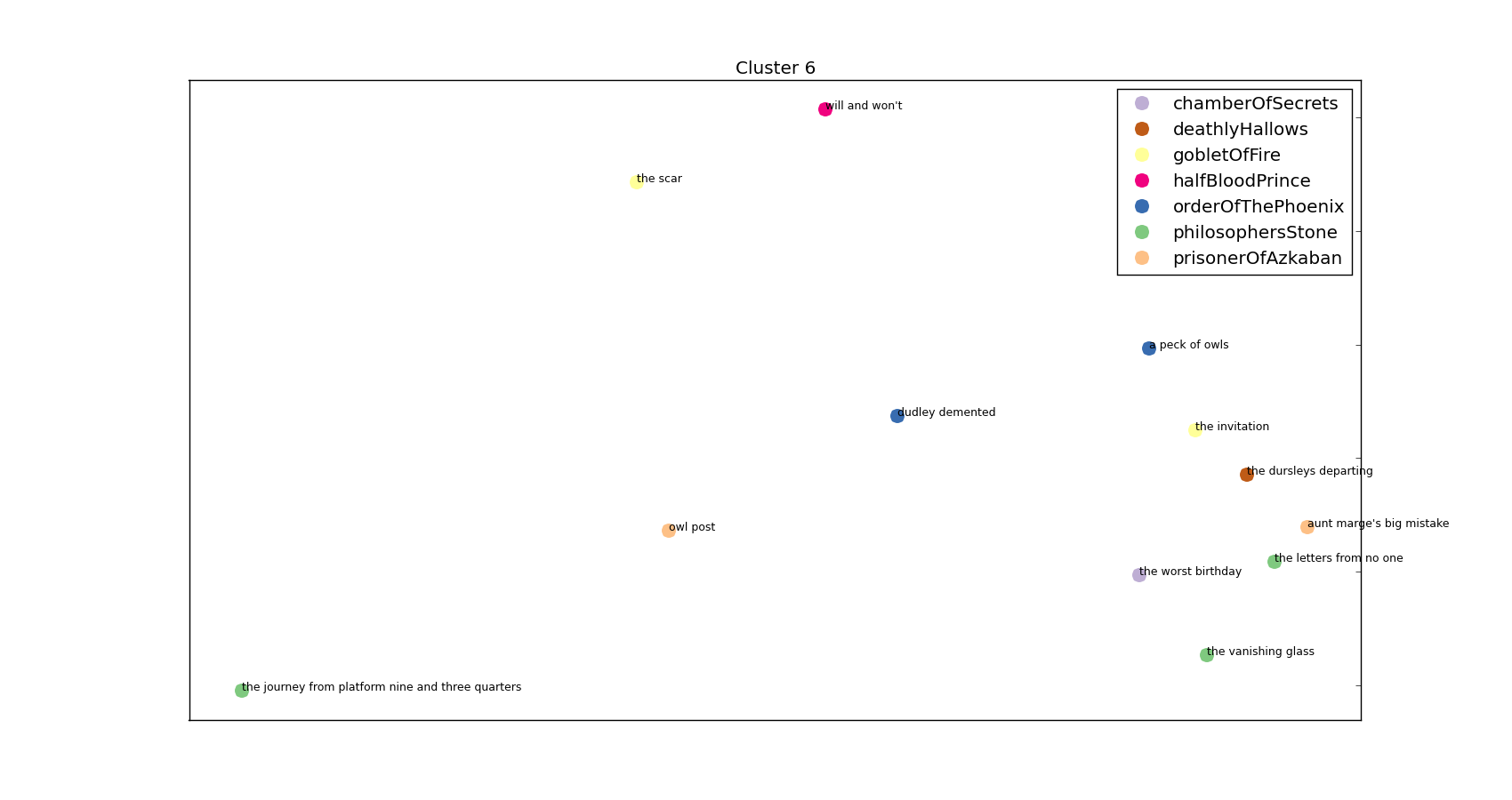

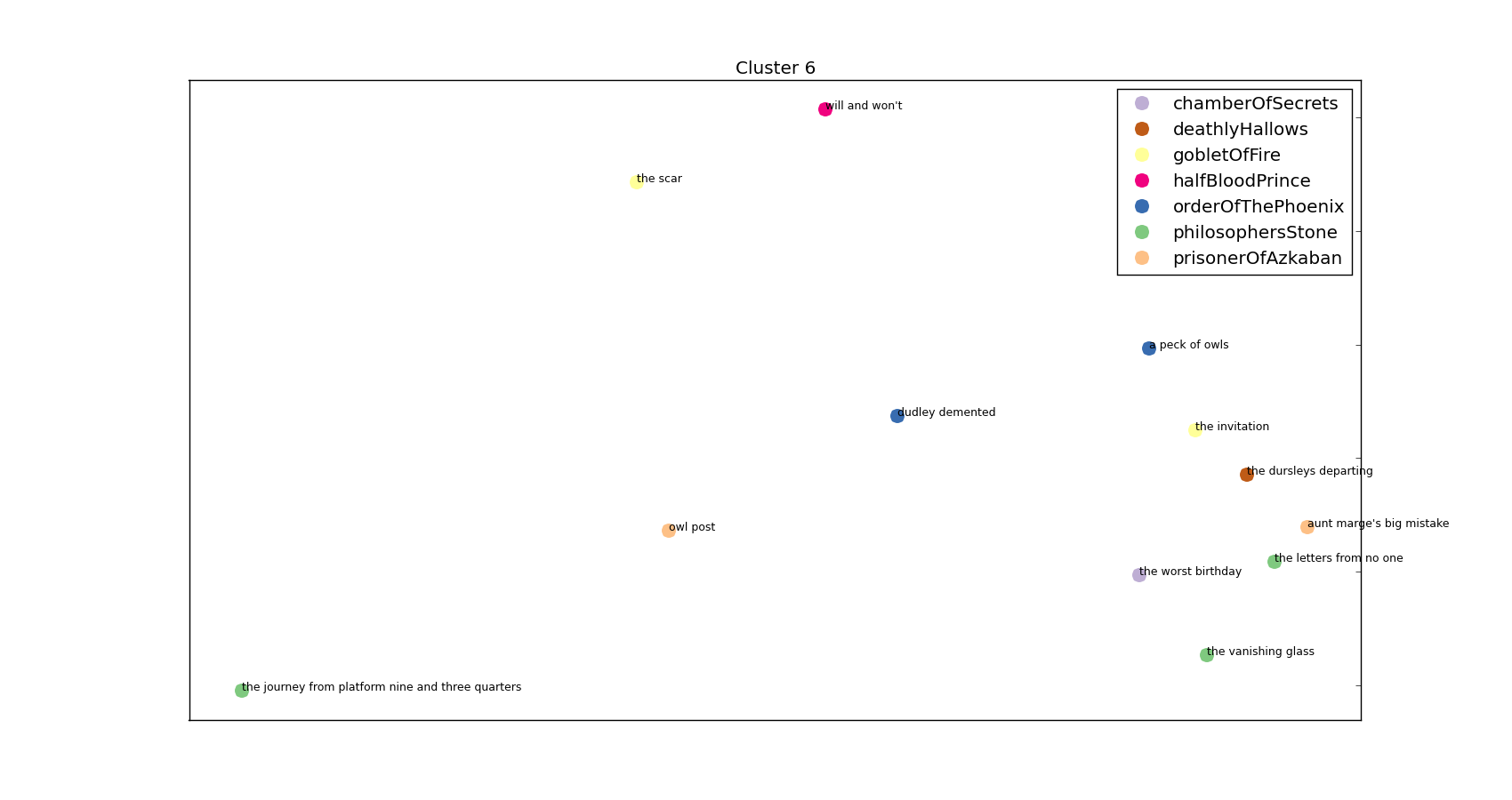

That’s far too messy to be of any practical use so let’s have a look at a couple of those clusters in more detail:

One of the clusters – a Dursley/Privet Drive heavy cluster!

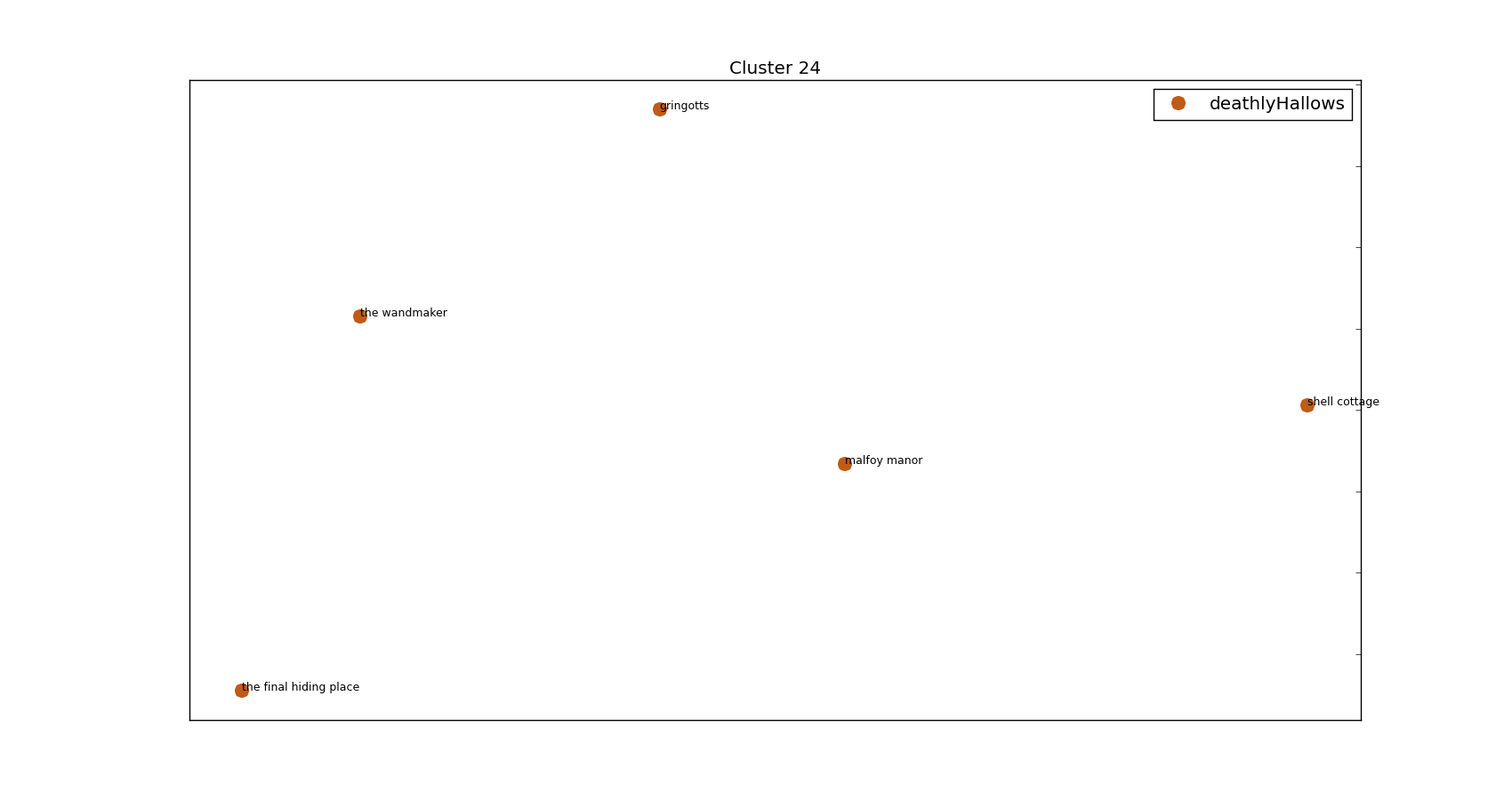

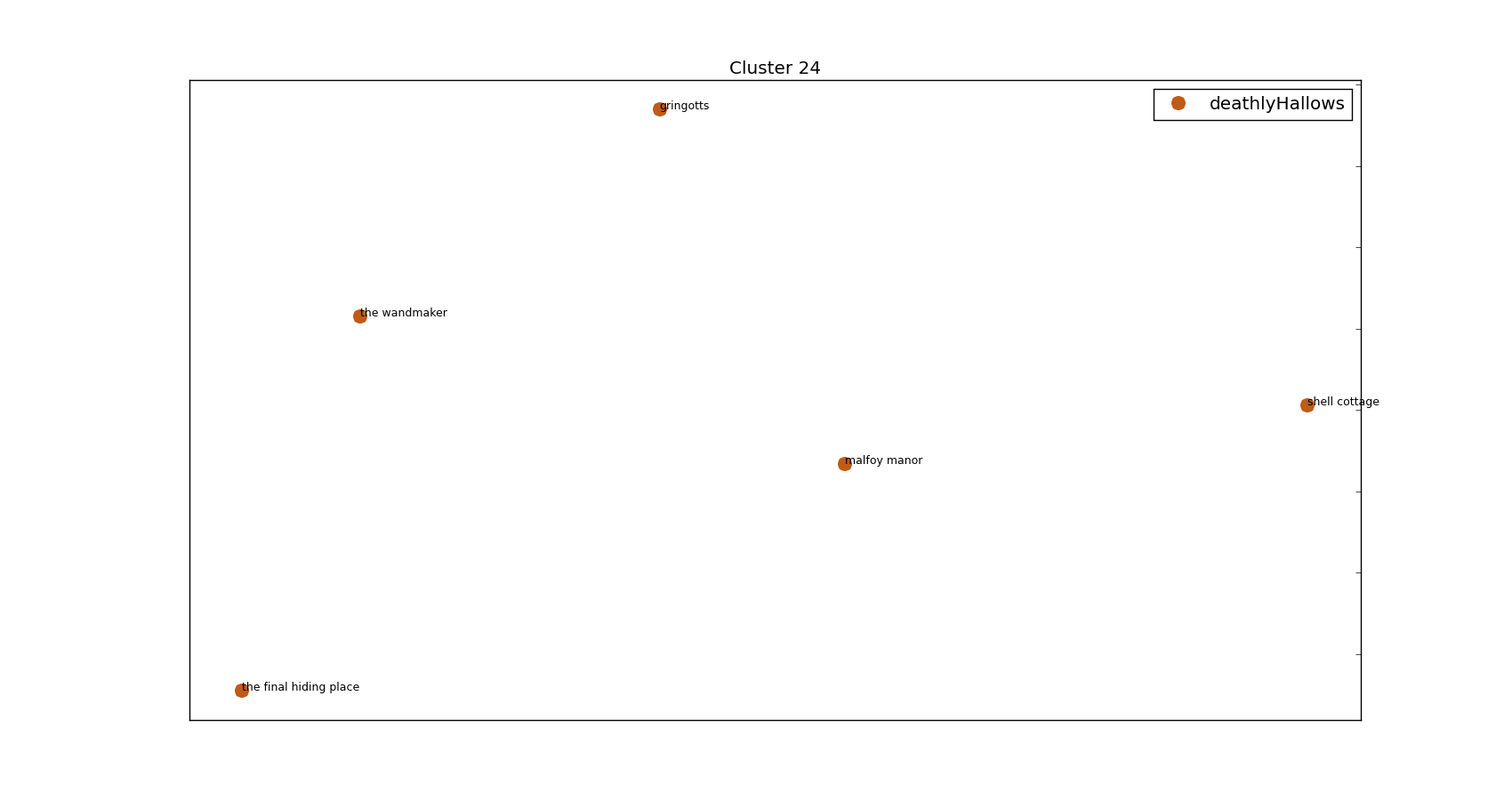

and

This is a pretty Griphook/goblin-heavy cluster, built around that storyline in book 7.

Hopefully that’s piqued your interest enough to keep scrolling and see how we got these clusters – and the words that tie them all together! The code for generating these is on my GitHub (https://github.com/Kali89/HarryPotterClusters), along with all the graphs and documents.

I went to a really interesting talk at PyData about Latent Dirichlet Allocation, a topic entirely new to me. I thought I’d love to apply it to my favourite book series – Harry Potter. However, that didn’t happen… instead you get this: a heavy rip-off of an excellent post (http://brandonrose.org/clustering) that walks through how to cluster documents using a bunch of techniques including K-means.

Step 1

Get plain text copies of all the Harry Potter books and make sure they’re all formatted in roughly the same way. As is often the case, this step took bloody ages.

Step 2

I want a few different documents – more than 7 (the number of Harry Potter books, for the heathens out there) but substantially fewer than the number of sentences in all 7 books. Treating each chapter as a separate document seemed to make the most sense, so here I initialise everything I need and split my books into chapters.

So at this point we’ve nicely got ourselves a list of chapter titles and a list of the associated text (as a string).
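For anyone wanting to try the split themselves, here is a minimal sketch of the idea rather than the code from the repository. It assumes every chapter opens with a line like `CHAPTER ONE` followed by a title line; the regex, the function name `split_into_chapters` and the sample text are all made up for illustration, and real copies of the books will almost certainly need a different pattern.

```python
import re

# Assumption: every chapter starts with a line such as "CHAPTER ONE",
# and the line after it holds the chapter title. Real files may differ.
CHAPTER_HEADING = re.compile(r"^CHAPTER [A-Z-]+[ \t]*$", re.MULTILINE)


def split_into_chapters(book_text):
    """Return (titles, texts): one title and one body string per chapter."""
    titles, texts = [], []
    # re.split drops the headings and keeps the text between them;
    # the first piece is whatever precedes chapter one, so skip it.
    for piece in CHAPTER_HEADING.split(book_text)[1:]:
        title, _, body = piece.strip().partition("\n")
        titles.append(title.strip().lower())
        texts.append(body.strip())
    return titles, texts


sample = """CHAPTER ONE
The Boy Who Lived
Mr and Mrs Dursley were proud to say they were perfectly normal.
CHAPTER TWO
The Vanishing Glass
Nearly ten years had passed.
"""
titles, texts = split_into_chapters(sample)
print(titles)
```

Lowercasing the titles here simply mirrors how chapter names appear in the cluster listings further down.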

Step 3

Now we’ve got our chapters we’re going to want to tokenise the text in them. Basically this means converting a string into discrete tokens – what we’d think of as words. The reason it’s got a fancy name, and the reason I’m being a bit careful about my terminology, is that tokenisation also takes care of things like punctuation and isn’t as simple as just splitting a string into separate words. Having said that, I’ve basically taken the path of least resistance and gone with a very simple tokenisation scheme. I’m also going to skip over the pain of UTF-8 encoding/decoding/recoding: I’ve basically just dropped any character that I found in the least bit complicated.
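A very simple scheme along those lines might look like the sketch below. It is an assumption about the general approach, not the tokeniser from the repository: the function name `tokenise` is hypothetical, and it just lowercases the text, throws away anything that isn’t plain ASCII, strips apostrophes and keeps runs of letters.

```python
import re


def tokenise(text):
    """Lowercase, drop non-ASCII characters and apostrophes, keep letter runs."""
    # Dropping anything non-ASCII sidesteps the encoding headaches (crudely).
    ascii_text = text.encode("ascii", errors="ignore").decode("ascii")
    # Removing apostrophes turns "don't" into "dont" and "Harry's" into "harrys".
    no_apostrophes = ascii_text.replace("'", "")
    return re.findall(r"[a-z]+", no_apostrophes.lower())


print(tokenise("\"Don't be silly,\" said Hermione. It was Harry's owl!"))
```

A side effect of this kind of scheme is tokens like `dont` and `harrys`, which is the sort of thing that shows up in the top words later on.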

Step 4

Next I’m going to perform TF-IDF (term frequency-inverse document frequency) on my chapters. For each token, I look at how many times it appears in a given document and how many documents it appears in overall. So in this instance I’m looking at how often a given word appears in a particular chapter, and in how many chapters across all 7 books it appears. This gives us an idea of how important/prominent a word is in a given chapter, taking into account how common the word is throughout all the chapters. As an example, ‘Harry’ is likely to feature a lot in a given chapter but is also likely to feature in every chapter, and so probably isn’t especially important to any given chapter’s classification. ‘Nicolas Flamel’, however, is going to appear a reasonable amount in a few chapters but not at all in the rest. We therefore know that Nicolas Flamel is important in the chapters he does appear in.
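To make the Harry versus Flamel point concrete, here is a hand-rolled sketch of the textbook formula (term frequency multiplied by the log of the inverse document frequency). The three toy "chapters" and the function name `tf_idf` are invented for illustration, and sklearn uses a smoothed variant of the formula, so its numbers will differ from these.

```python
import math

# Three toy "chapters", already tokenised. Entirely made up for illustration.
chapters = [
    ["harry", "found", "flamel", "flamel", "in", "a", "book"],
    ["harry", "flew", "on", "a", "broom"],
    ["harry", "wrote", "to", "sirius"],
]


def tf_idf(term, chapter, all_chapters):
    """Textbook TF-IDF: term frequency times log(N / document frequency)."""
    tf = chapter.count(term) / len(chapter)
    # Only call this for terms that occur somewhere, otherwise df is zero.
    df = sum(1 for c in all_chapters if term in c)
    return tf * math.log(len(all_chapters) / df)


# "harry" is in every chapter, so log(3 / 3) = 0 wipes out its score.
print(tf_idf("harry", chapters[0], chapters))
# "flamel" is in one chapter only, so it scores well above zero there.
print(tf_idf("flamel", chapters[0], chapters))
```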

Luckily, I don’t have to worry about the implementation of TF-IDF – sklearn has got it.

This gives me a large sparse matrix with the TF-IDF score for each word in each document as the entries. Pay close attention to the parameters I’ve passed along to TfidfVectorizer, as they are ripe for the changing. Firstly, `max_df=0.75` is saying that I don’t care about words that appear in more than 75% of the chapters. `min_df=0.05` is saying that I don’t care about words that appear in fewer than 5% of chapters. You can see I passed along my tokeniser and that I’m using English stop-words (that is, I’m removing the most common English words). Finally, I’m generating n-grams of between 1 and 4 tokens.

For those unfamiliar with n-grams, they’re basically a way of splitting text up into handy little chunks. As an example, the 3-grams of the following sentence:

“This is not the greatest song in the world”

would be:

“This is not”, “is not the”, “not the greatest”, “the greatest song”, etc.
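The sliding window behind that example fits in a couple of lines. This is a plain-Python sketch with a hypothetical helper name (`ngrams`), splitting on whitespace for simplicity; sklearn builds its n-grams internally, so you don’t need this to run the vectoriser.

```python
def ngrams(tokens, n):
    """Return every run of n consecutive tokens, joined by spaces."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


sentence = "This is not the greatest song in the world"
print(ngrams(sentence.split(), 3))
```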

This allows me to pick out common phrases such as “Snape said” and “wizarding world”. Again, that’s a setting that is begging to be played about with.

Step 5

Performing K-means clustering we get output like so:

Cluster 18 words:

Top words: maxime,madame maxime,karkaroff,madame,hagrid,cedric,moody,krum,champions,tournament

Chapter: the hungarian horntail, Book: gobletOfFire

Chapter: the goblet of fire, Book: gobletOfFire

Chapter: the four champions, Book: gobletOfFire

Chapter: beauxbatons and durmstrang, Book: gobletOfFire

Chapter: the beginning, Book: gobletOfFire

Chapter: the yule ball, Book: gobletOfFire

which is obviously quite a handy little cluster. It’s successfully managed to take chapters from only one book (given that we’ve not given K-means any access to the book information, that is a bit of a triumph). Those familiar with Harry Potter will see this is a Triwizard Tournament-heavy cluster. Another example:

Cluster 10 words:

Top words: wormtail,cold voice,voldemort,lord,cauldron,riddle,cedric,man,master,faithful

Chapter: the riddle house, Book: gobletOfFire

Chapter: flesh, blood, and bone, Book: gobletOfFire

Chapter: the death eaters, Book: gobletOfFire

Again, all the chapters are from one book – I’m counting that as a result. Added bonus, they’re not sequential chapters! I happen to know (by being a massive Harry Potter geek) that these chapters are towards the start and end of book 4 and focus heavily on Voldemort and Peter Pettigrew.

So far so good and in fact I could stop here but wouldn’t it be nice to visualise those clusters so we can see the topics we’ve picked out graphically? Even if you said no then it doesn’t really matter. I’m still going to do it.

Step 6

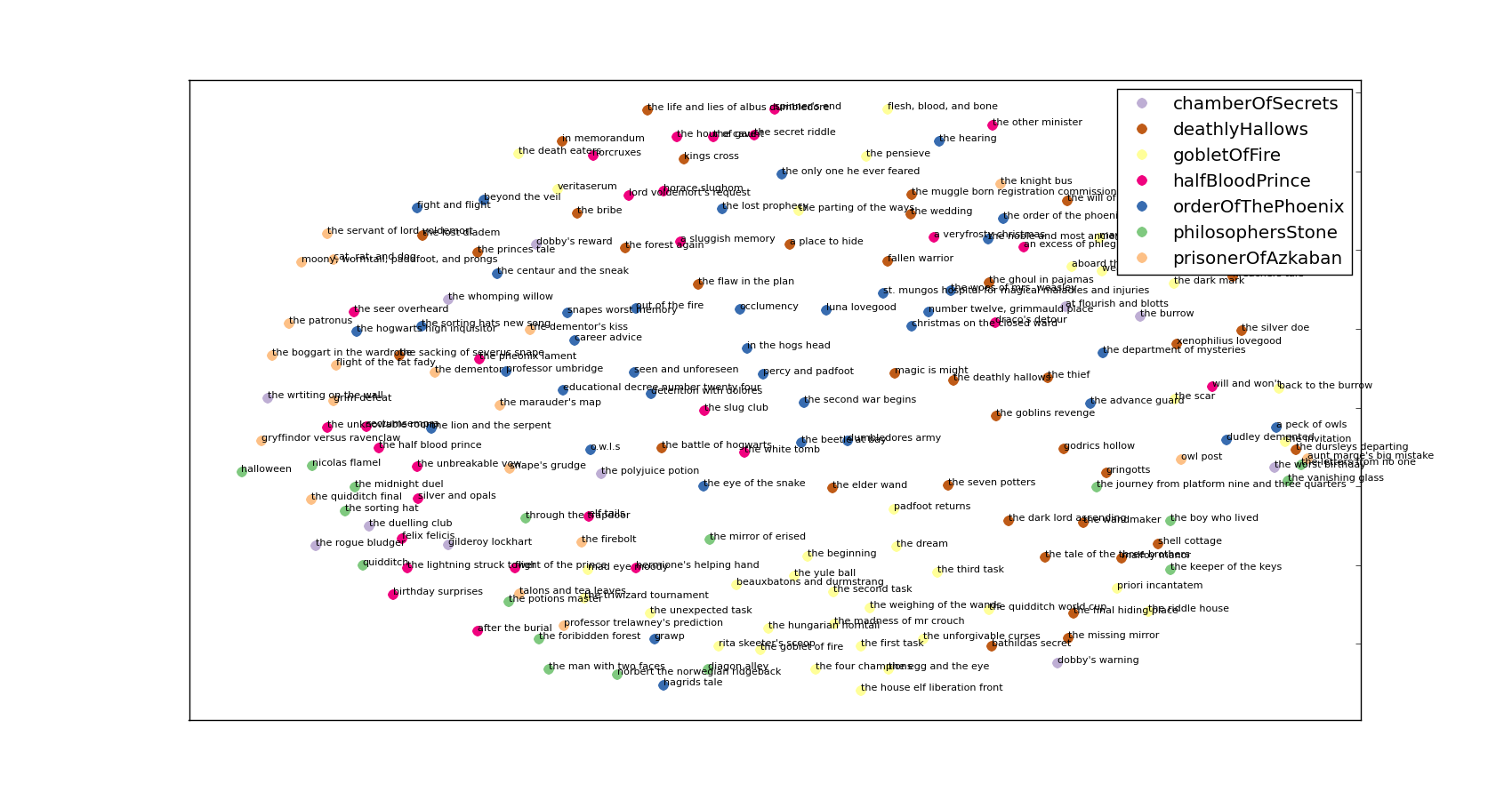

First things first, let’s plot all of the chapters on one graph and colour-code them by the book they came from. It’s not a great way of visualising clusters but it is a great way of seeing how everything is laid out:

A messy picture of all the chapters projected into 2d space.

Again, this section is shamelessly copied from the aforementioned blog, but ultimately we’re taking the cosine distances between the chapters’ TF-IDF vectors and projecting them into 2-dimensional space. I declare a colour dictionary for the books and then rattle through the chapters, plotting them.
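As a rough sketch of that projection, assuming multidimensional scaling (MDS) on a precomputed distance matrix is the technique in play: the toy texts and variable names below are made up, and plotting is left out so the snippet runs anywhere.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.manifold import MDS
from sklearn.metrics.pairwise import cosine_distances

# Made-up stand-ins for the chapter text.
texts = [
    "krum cedric champions tournament goblet",
    "krum cedric champions tournament ball",
    "wormtail voldemort cauldron master riddle",
    "wormtail voldemort cauldron master graveyard",
    "hagrid norbert dragon hut",
]
tfidf_matrix = TfidfVectorizer().fit_transform(texts)

# Cosine distance is 1 - cosine similarity: one row and column per chapter.
dist = cosine_distances(tfidf_matrix)

# "precomputed" tells MDS that dist already holds pairwise distances.
mds = MDS(n_components=2, dissimilarity="precomputed", random_state=1)
positions = mds.fit_transform(dist)  # shape (n_chapters, 2)

xs, ys = positions[:, 0], positions[:, 1]
print(positions.shape)
```

From here `xs` and `ys` can be handed to whatever plotting library you like, with each point’s colour looked up from a book-to-colour dictionary.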

I’m sure you’ll agree that’s far too messy for anybody to really do anything with. If you’re following along with the code you’ll see that next I generate the subplot figure shown at the top.

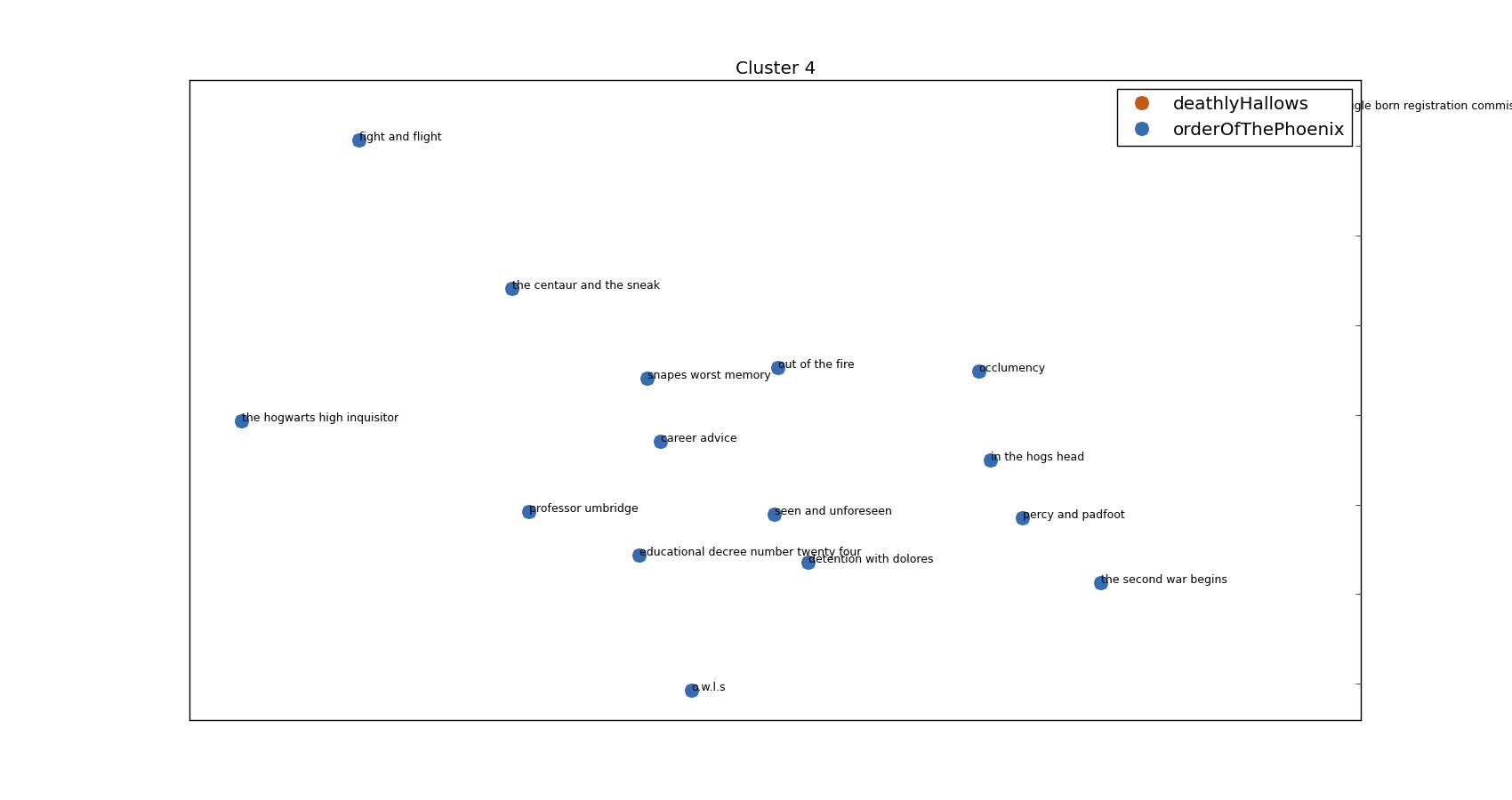

Finally, I create plots for each of the clusters – a few examples of which are:

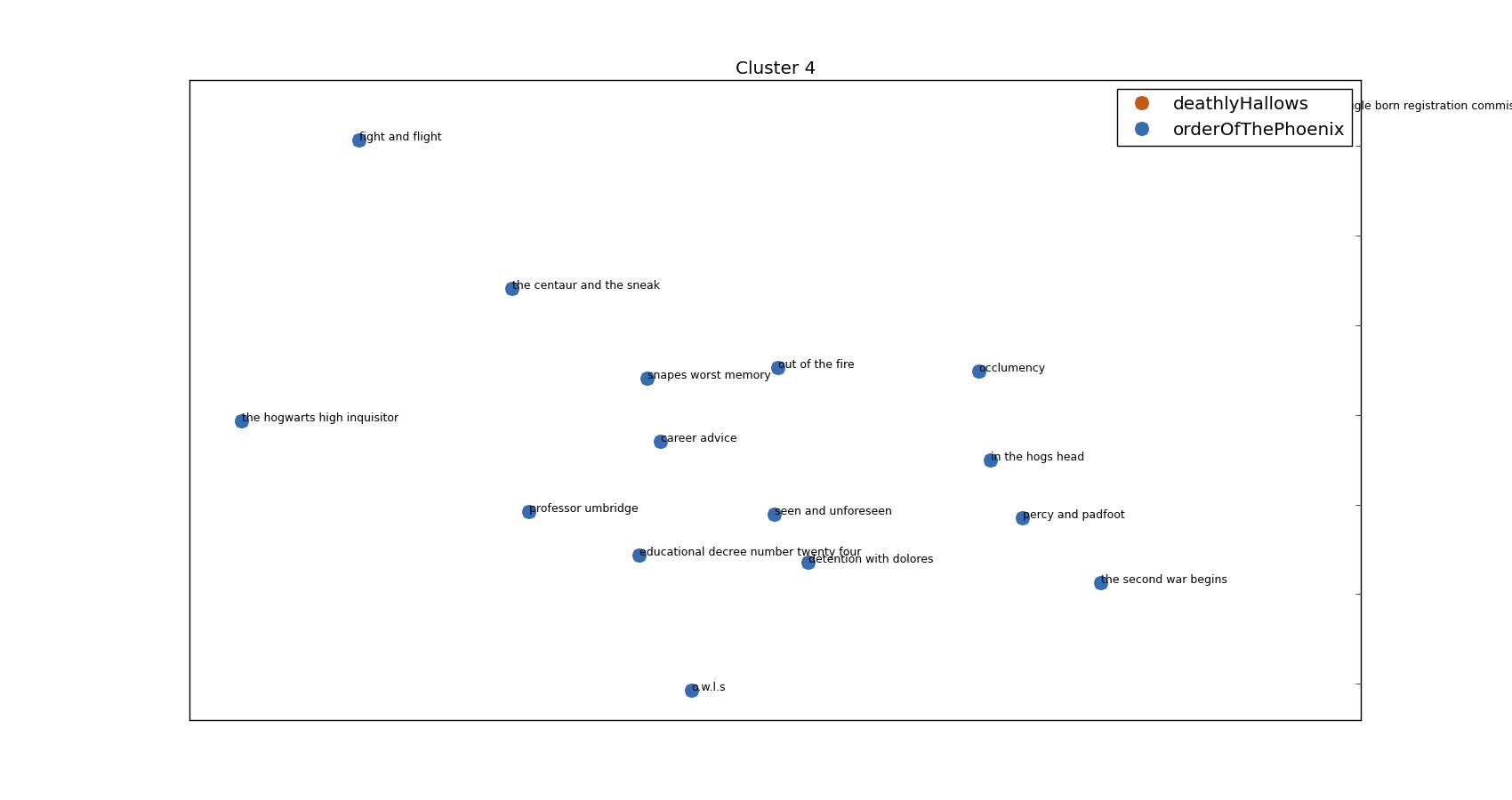

Cluster 3 words:

Top words: umbridge,professor,dont,professor umbridge,snape,sirius,im,said hermione,harrys,youre

Chapter: the muggle born registration commission, Book: deathlyHallows

Chapter: the hogwarts high inquisitor, Book: orderOfThePhoenix

Chapter: o.w.l.s, Book: orderOfThePhoenix

Chapter: the second war begins, Book: orderOfThePhoenix

Chapter: educational decree number twenty four, Book: orderOfThePhoenix

Chapter: the centaur and the sneak, Book: orderOfThePhoenix

Chapter: percy and padfoot, Book: orderOfThePhoenix

Chapter: detention with dolores, Book: orderOfThePhoenix

Chapter: occlumency, Book: orderOfThePhoenix

Chapter: in the hogs head, Book: orderOfThePhoenix

Chapter: out of the fire, Book: orderOfThePhoenix

Chapter: professor umbridge, Book: orderOfThePhoenix

Chapter: fight and flight, Book: orderOfThePhoenix

Chapter: seen and unforeseen, Book: orderOfThePhoenix

Chapter: career advice, Book: orderOfThePhoenix

Chapter: snapes worst memory, Book: orderOfThePhoenix

generates:

Umbridge’s cluster

Everybody’s favourite professor – Dolores Umbridge!

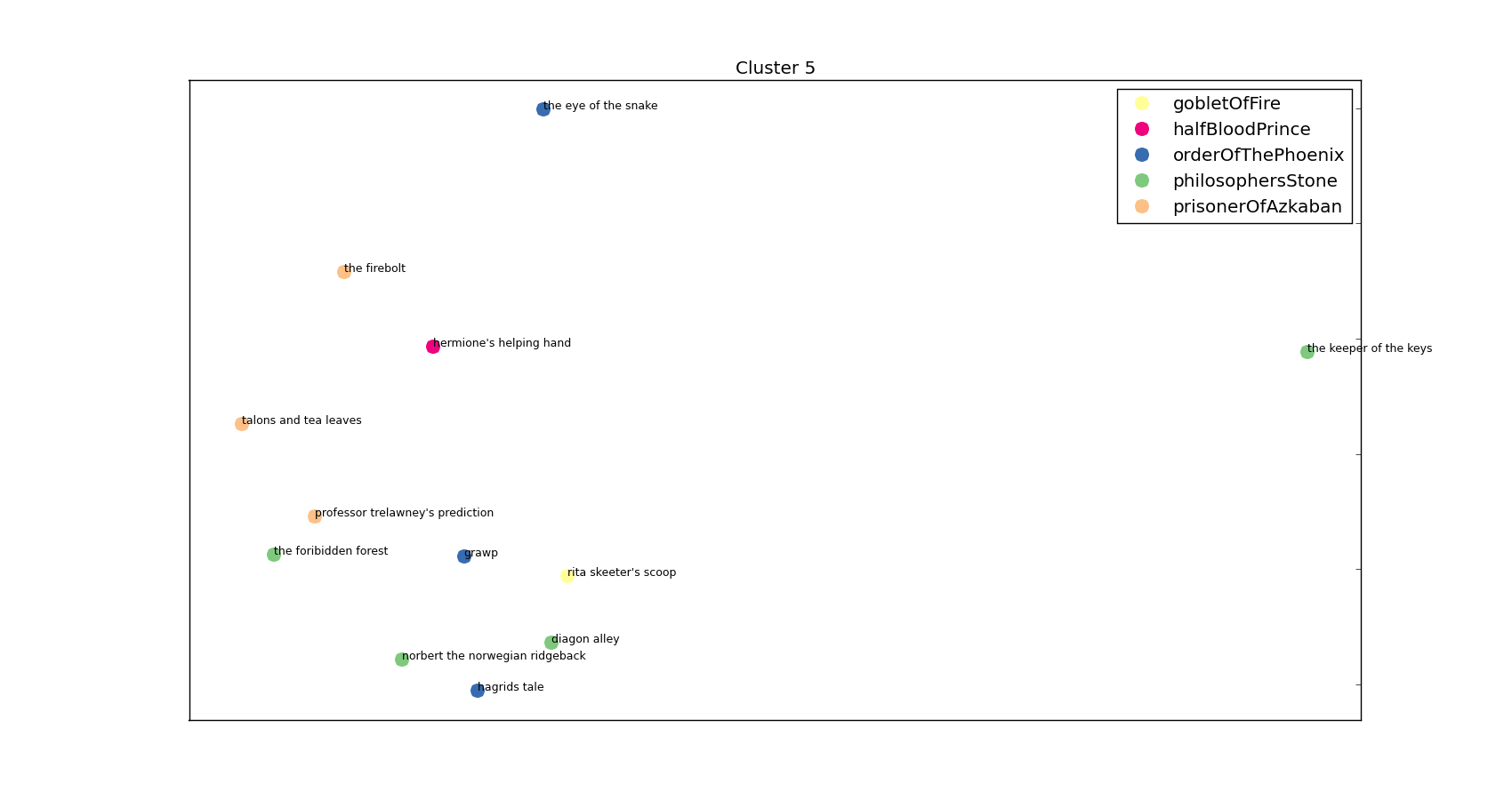

And another:

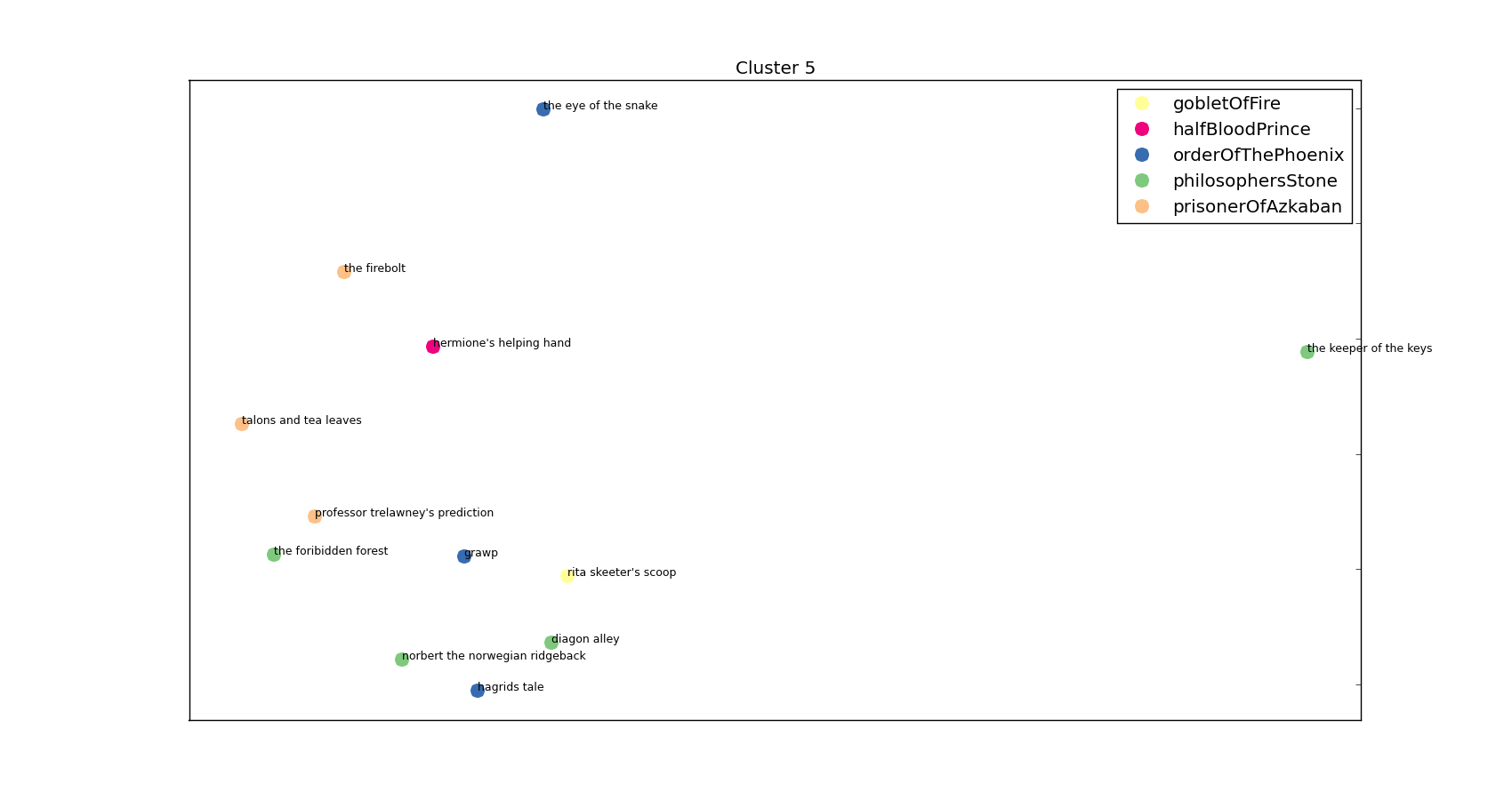

Top words: hagrid,yeh,ter,said hagrid,professor,said hermione,malfoy,o,professor trelawney,trelawney

Chapter: professor trelawney's prediction, Book: prisonerOfAzkaban

Chapter: talons and tea leaves, Book: prisonerOfAzkaban

Chapter: the firebolt, Book: prisonerOfAzkaban

Chapter: diagon alley, Book: philosophersStone

Chapter: the forbidden forest, Book: philosophersStone

Chapter: the keeper of the keys, Book: philosophersStone

Chapter: norbert the norwegian ridgeback, Book: philosophersStone

Chapter: hagrids tale, Book: orderOfThePhoenix

Chapter: the eye of the snake, Book: orderOfThePhoenix

Chapter: grawp, Book: orderOfThePhoenix

Chapter: hermione's helping hand, Book: halfBloodPrince

Chapter: rita skeeter's scoop, Book: gobletOfFire

and visually:

Keeper of the Keys

The anti-Umbridge – it’s Hagrid’s cluster!

I’ll stop the random copy/pasting of clusters and stick them all on my GitHub – I think you’ve got the idea! All in all I’m pretty happy with how this has worked, but I am very dependent on individual character names. I tried just looking at 2-grams but it usually just gave me “ said” with a few exceptions (‘wizarding world’, ‘said softly’, ‘death eater’, ‘godrics hollow’). I’ve also put almost zero effort into formatting the images – you know roughly what it’s meant to look like: having the pictures look good is an exercise I’m leaving to the reader’s imagination.

There’s loads more stuff I could do but I’m going to eat a chicken and go swimming so it’ll have to wait.

All was well.